Publications

Publications by category in reverse chronological order.

2026

- Evaluating Large Vision Language Models on Bangla Medical Visual Question Answering. Rafid Ahmed, Intesar Tahmid, Mir Sazzat Hossain, and 5 more authors. In Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2-7 July 2026

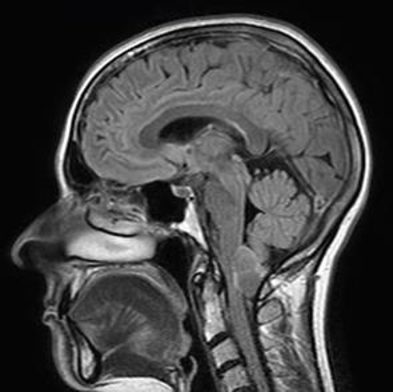

Recent advancements in Large Language Models (LLMs) and Large Vision Language Models (LVLMs) have enabled general-purpose systems to demonstrate promising capabilities in complex reasoning tasks, including those in the medical domain. However, their evaluation has predominantly focused on high-resource languages, leaving low-resource contexts like Bangla underexplored. To address this gap, we introduce BanglaMedVQA, a multilingual Medical Visual Question Answering (VQA) dataset comprising clinically validated image–question–answer pairs, along with a comprehensive evaluation of current LVLMs on this resource. We rigorously evaluate nine state-of-the-art LVLMs using zero-shot, Chain-of-Thought (CoT), and LoRA fine-tuning strategies. Our results reveal a clear performance disparity: models perform well on generalized visual tasks but struggle with fine-grained diagnostic reasoning, achieving surprisingly low accuracy in specialized categories. While fine-tuning significantly improves overall accuracy, especially for Qwen2.5-VL, limitations in specialized medical reasoning persist. Our work provides a foundation for future research in Bangla medical VQA.

@inproceedings{ahmed-etal-2026-banglamedvqa, title = {Evaluating Large Vision Language Models on Bangla Medical Visual Question Answering}, author = {Ahmed, Rafid and Tahmid, Intesar and Hossain, Mir Sazzat and Tomal, Tasnimul Hossain and Jawad, Md Mahir and Uddin, Anam Borhan and Fahim, Md and Bhuiyan, Md Farhad Alam}, booktitle = {Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)}, month = {2-7 July}, year = {2026}, address = {San Diego, California, USA}, publisher = {Association for Computational Linguistics}, url = {https://ahmedrafid023.github.io/med-vqa-page/}, }

- How Good LLMs Are at Answering Bangla Medical Visual Questions? Dataset and Benchmarking. Rafid Ahmed, Intesar Tahmid, Mir Sazzat Hossain, and 3 more authors. In Proceedings of The Second AAAI Bridge Program on AI for Medicine and Healthcare, 20–21 Jan 2026

Recent advancements in Large Language Models (LLMs) and Large Vision Language Models (LVLMs) have enabled general-purpose systems to demonstrate promising capabilities in complex reasoning tasks, including those in the medical domain. Medical Visual Question Answering (MedVQA) has particularly benefited from these developments. However, despite Bangla being one of the most widely spoken languages globally, there exists no established MedVQA benchmark for it. To address this gap, we introduce BanglaMedVQA, a dataset comprising clinically validated image–question–answer pairs, along with a comprehensive evaluation of current foundation models on this resource. Consistent with prior findings that report low performance of current models on English MedVQA benchmarks, our analysis reveals that Bangla performance is substantially lower, reflecting the challenges inherent to low-resource languages. Even top-performing models such as Gemini and GPT-4.1 mini fail to accurately answer specialized diagnostic questions, indicating severe limitations in fine-grained medical reasoning. Although certain open-source models, such as Gemma-3, occasionally outperform these models in general categories, they too struggle with clinically complex questions, underscoring the urgent need for top-notch evaluation methods.

@inproceedings{pmlr-v317-ahmed26a, title = {How Good LLMs Are at Answering Bangla Medical Visual Questions? Dataset and Benchmarking}, author = {Ahmed, Rafid and Tahmid, Intesar and Hossain, Mir Sazzat and Tomal, Tasnimul Hossain and Fahim, Md and Bhuiyan, Md Farhad Alam}, booktitle = {Proceedings of The Second AAAI Bridge Program on AI for Medicine and Healthcare}, pages = {1--14}, year = {2026}, editor = {Wu, Junde and Pan, Jiazhen and Zhu, Jiayuan and Luo, Luyang and Li, Yitong and Xu, Min and Jin, Yueming and Rueckert, Daniel}, volume = {317}, series = {Proceedings of Machine Learning Research}, month = {20--21 Jan}, publisher = {PMLR}, url = {https://proceedings.mlr.press/v317/ahmed26a.html}, }

2025

- Benchmarking Large Language Models on Bangla Dialect Translation and Dialectal Sentiment Analysis. Md Mahir Jawad, Rafid Ahmed, Ishita Sur Apan, and 4 more authors. In Proceedings of the IJCNLP 2025 Second Workshop on Bangla Language Processing (BLP-2025), Dec 2025

We are honored to receive the Best Long Paper Award.

We present a novel Bangla Dialect Dataset comprising 600 annotated instances across four major dialects: Chattogram, Barishal, Sylhet, and Noakhali. The dataset was constructed from YouTube comments spanning diverse domains to capture authentic dialectal variations in informal online communication. Each instance includes the original dialectal text, its standard Bangla translation, and sentiment labels (Positive and Negative). We benchmark several state-of-the-art large language models on dialect-to-standard translation and sentiment analysis tasks using zero-shot and few-shot prompting strategies. Our experiments reveal that transliteration significantly improves translation quality for closed-source models, with GPT-4o-mini achieving the highest BLEU score of 0.343 in zero-shot with transliteration. For sentiment analysis, GPT-4o-mini demonstrates perfect precision, recall, and F1 scores (1.000) in few-shot settings. This dataset addresses the critical gap in resources for low-resource Bangla dialects and provides a foundation for developing dialect-aware NLP systems.

@inproceedings{jawad-etal-2025-benchmarking, title = {Benchmarking Large Language Models on {B}angla Dialect Translation and Dialectal Sentiment Analysis}, author = {Jawad, Md Mahir and Ahmed, Rafid and Apan, Ishita Sur and Tomal, Tasnimul Hossain and Haider, Fabiha and Hossain, Mir Sazzat and Bhuiyan, Md Farhad Alam}, editor = {Alam, Firoj and Kar, Sudipta and Chowdhury, Shammur Absar and Hassan, Naeemul and Prince, Enamul Hoque and Tasnim, Mohiuddin and Rony, Md Rashad Al Hasan and Rahman, Md Tahmid Rahman}, booktitle = {Proceedings of the IJCNLP 2025 Second Workshop on Bangla Language Processing (BLP-2025)}, month = dec, year = {2025}, address = {Mumbai, India}, publisher = {Association for Computational Linguistics}, url = {https://aclanthology.org/2025.banglalp-1.26/}, pages = {322--337}, isbn = {979-8-89176-314-2} }

- PentaML at BLP-2025 Task 1: Linear Probing of Pre-trained Transformer-based Models for Bangla Hate Speech Detection. Intesar Tahmid, Rafid Ahmed, Md Mahir Jawad, and 3 more authors. In Proceedings of the IJCNLP 2025 Second Workshop on Bangla Language Processing (BLP-2025), Dec 2025

This paper presents our approach for the BLP Shared Task 1, where we implemented Linear Probing of Pre-trained Transformer-based Models for Bangla Hate Speech Detection. The goal of the task was to customize the existing models so that they’re capable of automatically identifying hate speech in Bangla social media text, with a focus on YouTube comments. Our approach relied on fine-tuning several pre-trained BERT models, adapting them to the shared task dataset for improved classification accuracy. To further enhance performance, we applied linear probing on three of the fine-tuned models, enabling more effective utilization of the learned representations. The combination of these strategies resulted in a consistent top-15 ranking across all subtasks of the competition. Our findings highlight the effectiveness of linear probing as a lightweight yet impactful technique for enhancing hate speech detection in low-resource languages like Bangla.

@inproceedings{tahmid-etal-2025-pentaml, title = {{P}enta{ML} at {BLP}-2025 Task 1: Linear Probing of Pre-trained Transformer-based Models for {B}angla Hate Speech Detection}, author = {Tahmid, Intesar and Ahmed, Rafid and Jawad, Md Mahir and Uddin, Anam Borhan and Fahim, Md and Bhuiyan, Md Farhad Alam}, editor = {Alam, Firoj and Kar, Sudipta and Chowdhury, Shammur Absar and Hassan, Naeemul and Prince, Enamul Hoque and Tasnim, Mohiuddin and Rony, Md Rashad Al Hasan and Rahman, Md Tahmid Rahman}, booktitle = {Proceedings of the IJCNLP 2025 Second Workshop on Bangla Language Processing (BLP-2025)}, month = dec, year = {2025}, address = {Mumbai, India}, publisher = {Association for Computational Linguistics}, url = {https://aclanthology.org/2025.banglalp-1.50/}, pages = {538--543}, isbn = {979-8-89176-314-2} }