Convolutional Neural Network (CNN): Detailed Mechanics and Visualization

Convolutional Neural Networks (CNNs) are a class of deep learning models particularly effective for processing grid-like data such as images. This note summarizes the fundamental concepts of CNNs, including the structure of convolutional layers, the role of kernels and filters, multi-channel inputs, and the computation of feature maps through dot products and summations across channels.

It also covers how CNNs capture spatial hierarchies in data and the intuition behind pooling and activation functions. Illustrative numeric examples are included to demonstrate the convolution operation and multi-channel processing. This note serves as a practical reference for understanding the inner workings of CNNs and their applications in image recognition and related tasks.

Introduction

CNNs exploit three properties of image data: local spatial correlation (nearby pixels are related), parameter sharing (the same filter is applied at every location), and hierarchical feature learning (simple features compose into more complex ones). Together these let a CNN extract patterns with far fewer parameters than a fully connected network applied to the same input. CNNs form the backbone of many state-of-the-art systems in image classification, object detection, and more.

Input Representation

An image is represented as a three-dimensional tensor:

\[\mathbf{X} \in \mathbb{R}^{H \times W \times C}\]

where $H$ is the height, $W$ is the width, and $C$ is the number of channels (e.g., $C=1$ for grayscale and $C=3$ for RGB color images).

For an RGB image:

\[\mathbf{X} = \big[\,\mathbf{X}^{(R)},\,\mathbf{X}^{(G)},\,\mathbf{X}^{(B)}\,\big]\]

Each channel $\mathbf{X}^{(c)}$ is an $H \times W$ matrix holding one color component; the three matrices are stacked along the channel axis.

Convolutional Layer

The convolutional layer is the core computational unit of a CNN. It consists of a set of learnable filters (or kernels), each of which learns during training to detect a specific feature, such as an edge, texture, or shape, wherever it appears in the input.

A single filter has dimensions:

\[\mathbf{W} \in \mathbb{R}^{k \times k \times C}\]

with an associated scalar bias $b$.

Convolution Operation

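At a given spatial location $(i,j)$, the layer computes the inner product between the filter weights and the corresponding local $k \times k$ patch of the input across all channels, then adds the bias. (Strictly speaking this is cross-correlation, since the kernel is not flipped; deep learning literature conventionally calls it convolution.)

\[Z_{i,j} = \sum_{c=1}^{C} \sum_{m=0}^{k-1} \sum_{n=0}^{k-1} X^{(c)}_{i+m,j+n} \cdot W^{(c)}_{m,n} + b\]

Channel Summation Intuition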

A single filter is meant to detect one type of feature across all channels. Each channel provides partial evidence of the feature — for example, an edge might be strong in the red channel and weaker in green or blue. Summing the dot products aggregates this evidence into a single activation value, representing the confidence that the feature exists at that location.
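The channel-wise aggregation can be sketched in plain Python. This is a minimal illustration rather than a reference implementation: the function names, the channels-first nested-list layout, and the demo values are all assumptions made for the example.

```python
def channel_contributions(X, W, i, j):
    """Per-channel dot products between filter W and the patch of X at (i, j).

    X and W are lists of channels; each channel is a list of rows
    (a channels-first layout, chosen here only for readability).
    """
    k = len(W[0])
    return [
        sum(X[c][i + m][j + n] * W[c][m][n] for m in range(k) for n in range(k))
        for c in range(len(W))
    ]


def activation_at(X, W, b, i, j):
    """Z_{i,j}: sum the per-channel evidence, then add the bias."""
    return sum(channel_contributions(X, W, i, j)) + b


# Hypothetical 2x2 input with 3 channels: a vertical edge that is strong
# in channel 0, weak in channel 1, and absent in channel 2.
X_demo = [
    [[9, 1], [9, 1]],
    [[3, 2], [3, 2]],
    [[1, 1], [1, 1]],
]
# The same vertical-edge kernel is used in every channel (illustrative choice).
W_demo = [[[1, -1], [1, -1]] for _ in range(3)]

parts = channel_contributions(X_demo, W_demo, 0, 0)
z = activation_at(X_demo, W_demo, -1.0, 0, 0)
print(parts, z)  # [16, 2, 0] 17.0
```

Each entry of `parts` is one channel's evidence for the edge; the single activation `z` is their sum plus the bias.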

Multiple Filters

Using $N$ filters produces $N$ output feature maps:

\[\text{Output shape} = H' \times W' \times N\]

where, for stride $1$ and no padding, $H' = H - k + 1$ and $W' = W - k + 1$. Each filter learns to recognize a distinct feature pattern.

Non-linearity: Activation Functions

After convolution, an element-wise non-linear activation is applied. The Rectified Linear Unit (ReLU) is the most common choice:

\[A_{i,j} = \mathrm{ReLU}(Z_{i,j}) = \max(0, Z_{i,j})\]

This introduces non-linearity. Without it, a stack of convolutional layers would collapse into a single linear map; with it, the network can approximate complex functions.
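As a small sketch of the element-wise operation, the following applies ReLU to a feature map stored as a list of rows. The function name and the sample values are illustrative assumptions.

```python
def relu(feature_map):
    """Apply max(0, z) to every entry of a 2D feature map (list of rows)."""
    return [[max(0.0, z) for z in row] for row in feature_map]


Z = [[2.0, -1.5], [-4.0, 3.0]]  # hypothetical pre-activation values
A = relu(Z)
print(A)  # [[2.0, 0.0], [0.0, 3.0]]
```

Negative responses are zeroed while positive ones pass through unchanged, so the output has the same shape as the input.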

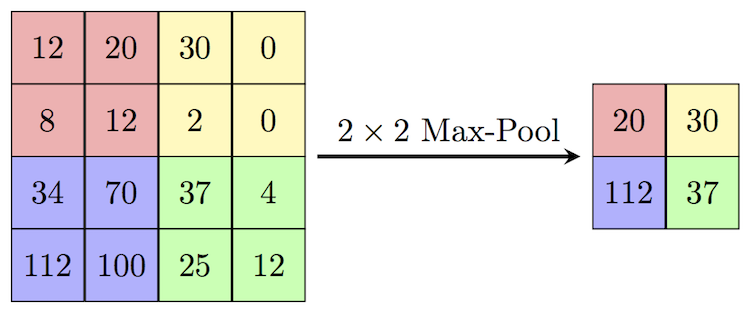

Pooling Layer

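Pooling layers downsample each feature map along height and width, which reduces computation and makes the representation less sensitive to small translations of the input. Pooling is applied to each channel independently, so the number of channels is unchanged. For max pooling with a $p \times p$ window and stride $s$ (commonly $s = p$, so that windows do not overlap):

\[P_{i,j} = \max_{0 \le m,n < p} A_{s i+m,\,s j+n}\]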
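A minimal sketch of max pooling on a single channel follows. The `stride` parameter is an assumption added for illustration: it defaults to the window size $p$ (non-overlapping windows), and setting it to 1 slides the window one position at a time. The input values are made up.

```python
def max_pool2d(A, p=2, stride=None):
    """Max pooling over p x p windows of a 2D map A (list of rows)."""
    s = p if stride is None else stride
    H, W = len(A), len(A[0])
    return [
        [
            max(A[s * i + m][s * j + n] for m in range(p) for n in range(p))
            for j in range((W - p) // s + 1)
        ]
        for i in range((H - p) // s + 1)
    ]


A = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [3, 6, 1, 1],
]
P = max_pool2d(A)  # 2x2 windows, stride 2
print(P)  # [[4, 5], [6, 7]]
```

With the default stride, the $4 \times 4$ input shrinks to $2 \times 2$, each output keeping only the strongest activation in its window.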

Hierarchical Abstraction

CNNs learn hierarchical representations through successive application of convolution, non-linearity, and pooling. Early layers capture low-level features such as edges and corners. Intermediate layers combine these into motifs like textures, and deeper layers form high-level concepts such as object parts.

Interactive visualization techniques, such as those developed by Harley, make these layer-by-layer representations directly inspectable. For example, an interactive node visualization platform can be explored at: https://adamharley.com/nn_vis/cnn/3d.html

This 3D visualization shows how activations propagate throughout a CNN’s layers when processing an input image.

Flattening and Fully Connected Layers

After convolutional and pooling layers, the feature maps are flattened into a vector:

\[\mathbf{f} \in \mathbb{R}^{d}\]

This vector is passed to one or more fully connected layers:

\[\mathbf{h} = \mathbf{W}_{fc} \cdot \mathbf{f} + \mathbf{b}_{fc}\]

followed by an activation function.
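The flatten-then-project step can be sketched as follows. The helper names, the channel-major flattening order, and all numeric values are assumptions for illustration; any fixed ordering works as long as it is used consistently.

```python
def flatten(feature_maps):
    """Flatten a list of channels (each a list of rows) into one vector f."""
    return [v for channel in feature_maps for row in channel for v in row]


def dense(W_fc, f, b_fc):
    """Compute h = W_fc . f + b_fc for a weight matrix given as a list of rows."""
    return [sum(w * x for w, x in zip(row, f)) + b for row, b in zip(W_fc, b_fc)]


# Two hypothetical 2x2 feature maps -> d = 8.
maps = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[0.0, 1.0], [1.0, 0.0]],
]
f = flatten(maps)

# A made-up layer with 2 output neurons.
W_fc = [
    [1.0] * 8,
    [0.5, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, -1.0],
]
b_fc = [0.0, 1.0]

h = dense(W_fc, f, b_fc)
h_activated = [max(0.0, v) for v in h]  # ReLU as the follow-up activation
print(len(f), h)  # 8 [12.0, 1.5]
```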

Output Layer and Softmax

For classification into $K$ classes, the final layer produces logits:

\[\mathbf{o} = \mathbf{W}_{out} \cdot \mathbf{h} + \mathbf{b}_{out}\]

The Softmax function converts logits into probabilities:

\[\hat{y}_k = \frac{e^{o_k}}{\sum_{j=1}^{K} e^{o_j}}\]

with $\sum_{k=1}^{K} \hat{y}_k = 1$.
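A short sketch of Softmax in plain Python is given below. Subtracting the maximum logit before exponentiating is a common numerical-stability trick that is not part of the formula above; it leaves the result unchanged because the shift cancels in the ratio. The logits are made-up values.

```python
import math


def softmax(logits):
    """Convert a list of logits into probabilities that sum to 1."""
    m = max(logits)  # shift for numerical stability; cancels in the ratio
    exps = [math.exp(o - m) for o in logits]
    total = sum(exps)
    return [e / total for e in exps]


o = [2.0, 1.0, 0.1]  # hypothetical logits for K = 3 classes
y_hat = softmax(o)
print(y_hat, sum(y_hat))
```

The largest logit always receives the largest probability, so the predicted class is the index of the maximum in either representation.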

Loss Function and Learning

The network is trained by minimizing a loss function, such as cross-entropy for classification:

\[\mathcal{L} = -\sum_{k=1}^{K} y_k \log(\hat{y}_k)\]

where $\mathbf{y}$ is the one-hot ground truth vector.
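Because $\mathbf{y}$ is one-hot, only the term for the true class survives the sum. The sketch below evaluates the loss for the digit example used later in this note (true class 7, predicted probability 0.50); the function name is an assumption.

```python
import math


def cross_entropy(y, y_hat):
    """Cross-entropy between a one-hot target y and predicted probabilities y_hat."""
    # Terms with y_k = 0 contribute nothing, so they are skipped;
    # this also avoids evaluating log(0) for classes that are not the target.
    return -sum(t * math.log(p) for t, p in zip(y, y_hat) if t > 0)


y = [0, 0, 0, 0, 0, 0, 0, 1, 0, 0]  # one-hot target for digit 7
y_hat = [0.01, 0.02, 0.03, 0.05, 0.04, 0.08, 0.10, 0.50, 0.12, 0.05]

loss = cross_entropy(y, y_hat)
print(loss)  # -log(0.5), about 0.693
```

A perfect prediction ($\hat{y}_7 = 1$) would give a loss of 0, and the loss grows without bound as the probability assigned to the true class approaches 0.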

Training uses gradient descent and backpropagation:

\[W \leftarrow W - \eta \frac{\partial \mathcal{L}}{\partial W}, \quad b \leftarrow b - \eta \frac{\partial \mathcal{L}}{\partial b}\]

where $\eta$ is the learning rate.
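To make the update rule concrete, here is a sketch of one gradient descent step for the output layer only. It relies on a standard result that this note does not derive: for Softmax followed by cross-entropy, $\partial \mathcal{L} / \partial o_k = \hat{y}_k - y_k$. All names and values are illustrative assumptions, and a real network would backpropagate this gradient through the earlier layers as well.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(o - m) for o in logits]
    total = sum(exps)
    return [e / total for e in exps]


def forward(W, b, h):
    """Output-layer probabilities: softmax(W . h + b)."""
    logits = [sum(w * x for w, x in zip(row, h)) + bk for row, bk in zip(W, b)]
    return softmax(logits)


def cross_entropy(y, y_hat):
    return -sum(t * math.log(p) for t, p in zip(y, y_hat) if t > 0)


def sgd_step(W, b, h, y, lr):
    """One update W <- W - lr * dL/dW, b <- b - lr * dL/db."""
    y_hat = forward(W, b, h)
    delta = [p - t for p, t in zip(y_hat, y)]  # dL/do_k = y_hat_k - y_k
    W_new = [[w - lr * d * x for w, x in zip(row, h)] for row, d in zip(W, delta)]
    b_new = [bk - lr * d for bk, d in zip(b, delta)]
    return W_new, b_new


# Hypothetical 2-class layer with 2 input features.
W = [[0.0, 0.0], [0.0, 0.0]]
b = [0.0, 0.0]
h = [1.0, 2.0]
y = [1.0, 0.0]

loss_before = cross_entropy(y, forward(W, b, h))
W, b = sgd_step(W, b, h, y, lr=0.1)
loss_after = cross_entropy(y, forward(W, b, h))
print(loss_before, loss_after)  # the loss decreases after the step
```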

Interactive Visualization of CNN Behavior

Interactive visualizations offer educational insights into network dynamics by allowing users to manipulate input and observe layer activations. For example, an interactive node-link visualization designed by Harley shows real-time activations of a CNN trained on handwritten digits, helping students see how input perturbations alter intermediate representations and final predictions. Such tools reveal:

- Activation strength and patterns across layers.

- How filters respond to different input features.

- The hierarchical transformation from pixels to high-level classification.

Summary of the CNN Pipeline

\[\text{Input} \rightarrow \text{Convolution} \rightarrow \text{ReLU} \rightarrow \text{Pooling} \rightarrow \text{Flatten} \rightarrow \text{Fully Connected} \rightarrow \text{Softmax}\]

In practice the convolution, ReLU, and pooling stages are repeated several times before flattening, so the network transforms raw pixel data into class predictions through progressively more abstract features.

Example Walkthroughs

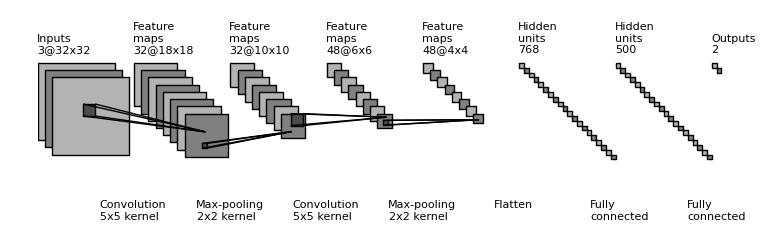

1. Architecture Example: Digit Recognition

Input: A $28\times28$ grayscale image of a handwritten digit (e.g., “7”).

- Convolutional Layer 1: 6 filters of size $5\times5$, stride $1$, no padding.

  - Input shape: $28\times28\times1$

  - Output shape: $24\times24\times6$ (since $28-5+1=24$)

- Activation: ReLU applied → same shape: $24\times24\times6$.

- Pooling Layer 1: $2\times2$ max pooling, stride $2$.

  - Output shape: $12\times12\times6$.

- Convolutional Layer 2: 16 filters of size $5\times5$, stride $1$, no padding.

  - Input shape: $12\times12\times6$

  - Output shape: $8\times8\times16$.

- Activation: ReLU → $8\times8\times16$.

- Pooling Layer 2: $2\times2$ max pooling, stride $2$.

  - Output shape: $4\times4\times16$.

- Flatten: $4\times4\times16 = 256$ neurons.

- Fully Connected Layer 1: 120 neurons.

- Fully Connected Layer 2: 84 neurons.

- Output Layer (Softmax): 10 neurons (digits $0$–$9$).
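The shapes listed above can be traced programmatically. The sketch below assumes the standard output-size formula $\lfloor (n + 2 \cdot \text{padding} - k) / \text{stride} \rfloor + 1$, which reduces to $n - k + 1$ for stride 1 and no padding; the helper names and the layer encoding are hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one axis for a convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, window, stride):
    """Output length along one axis for a pooling window."""
    return (size - window) // stride + 1


# (kind, kernel/window size, number of filters or None for pooling)
layers = [("conv", 5, 6), ("pool", 2, None), ("conv", 5, 16), ("pool", 2, None)]

trace = [(28, 28, 1)]  # input: 28x28 grayscale
for kind, k, n_filters in layers:
    h, w, c = trace[-1]
    if kind == "conv":
        trace.append((conv_out(h, k), conv_out(w, k), n_filters))
    else:  # 2x2 max pooling with stride 2 keeps the channel count
        trace.append((pool_out(h, k, 2), pool_out(w, k, 2), c))

h, w, c = trace[-1]
flat = h * w * c
print(trace)  # [(28, 28, 1), (24, 24, 6), (12, 12, 6), (8, 8, 16), (4, 4, 16)]
print(flat)   # 256
```

ReLU is omitted from the trace because it never changes the shape.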

Output Probabilities:

\[\text{Output probabilities} = \begin{bmatrix} p_0 \\ p_1 \\ p_2 \\ p_3 \\ p_4 \\ p_5 \\ p_6 \\ p_7 \\ p_8 \\ p_9 \end{bmatrix} = \begin{bmatrix} 0.01 \\ 0.02 \\ 0.03 \\ 0.05 \\ 0.04 \\ 0.08 \\ 0.10 \\ \mathbf{0.50} \\ 0.12 \\ 0.05 \end{bmatrix}\]

The network predicts class 7 with highest probability ($p_7 = 0.50$).

2. Calculation Example: 2D Convolution

Input image:

\[\mathbf{X} = \begin{bmatrix} 1 & 2 & 0 & 1 & 0 & 2 \\ 0 & 1 & 2 & 1 & 1 & 0 \\ 1 & 0 & 1 & 2 & 0 & 1 \\ 2 & 1 & 0 & 1 & 1 & 2 \end{bmatrix}\]

Kernel ($3\times3$):

\[\mathbf{K} = \begin{bmatrix} 1 & 0 & -1 \\ 0 & 1 & 0 \\ -1 & 0 & 1 \end{bmatrix}\]

Convolution operation (top-left position):

\[\begin{aligned} \text{output}_{1,1} &= (1\cdot1) + (2\cdot0) + (0\cdot(-1)) \\ &+ (0\cdot0) + (1\cdot1) + (2\cdot0) \\ &+ (1\cdot(-1)) + (0\cdot0) + (1\cdot1) \\ &= 2 \end{aligned}\]

Next position (top-middle, shift by 1 column):

\[\begin{aligned} \text{output}_{1,2} &= (2\cdot1) + (0\cdot0) + (1\cdot(-1)) \\ &+ (1\cdot0) + (2\cdot1) + (1\cdot0) \\ &+ (0\cdot(-1)) + (1\cdot0) + (2\cdot1) \\ &= 5 \end{aligned}\]

Output feature map (shape $2\times4$ for stride 1, no padding):

\[\mathbf{Y} = \begin{bmatrix} 2 & 5 & ? & ? \\ ? & ? & ? & ? \end{bmatrix}\]

3. Calculation Example: 3D Convolution (RGB Input)

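Instead of a single grid, consider how convolution works on a 3-channel input such as an RGB image. Despite the name, the filter still slides only along height and width; it simply spans the full depth of the input, so each position produces a single output value.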

Input Volume ($\mathbf{X}$): A $3 \times 3$ image with 3 channels ($R, G, B$). Shape: $3 \times 3 \times 3$.

\[\mathbf{X}_{R} = \begin{bmatrix} 1 & 0 & 1 \\ 0 & 1 & 0 \\ 1 & 0 & 1 \end{bmatrix} \quad \mathbf{X}_{G} = \begin{bmatrix} 1 & 1 & 0 \\ 1 & 0 & 1 \\ 0 & 1 & 1 \end{bmatrix} \quad \mathbf{X}_{B} = \begin{bmatrix} 0 & 1 & 0 \\ 1 & 1 & 0 \\ 0 & 0 & 2 \end{bmatrix}\]

Filter/Kernel ($\mathbf{W}$): A $2 \times 2$ filter, which must also have 3 channels to match the input depth. Shape: $2 \times 2 \times 3$. Bias $b = 1$.

\[\mathbf{W}_{R} = \begin{bmatrix} 1 & -1 \\ 0 & 1 \end{bmatrix} \quad \mathbf{W}_{G} = \begin{bmatrix} 0 & 1 \\ -1 & 1 \end{bmatrix} \quad \mathbf{W}_{B} = \begin{bmatrix} 1 & 0 \\ 0 & -1 \end{bmatrix}\]

Convolution Operation (Top-Left Position):

To calculate the single output value at position $(0,0)$, compute the dot product for each channel separately, then sum the three results and add the bias.

Red Channel Contribution: \((1 \cdot 1) + (0 \cdot (-1)) + (0 \cdot 0) + (1 \cdot 1) = 1 + 0 + 0 + 1 = 2\)

Green Channel Contribution: \((1 \cdot 0) + (1 \cdot 1) + (1 \cdot (-1)) + (0 \cdot 1) = 0 + 1 - 1 + 0 = 0\)

Blue Channel Contribution: \((0 \cdot 1) + (1 \cdot 0) + (1 \cdot 0) + (1 \cdot (-1)) = 0 + 0 + 0 - 1 = -1\)

Final Result ($Z_{0,0}$): \(Z_{0,0} = (\text{Red} + \text{Green} + \text{Blue}) + \text{Bias} = (2 + 0 - 1) + 1 = \mathbf{2}\)

Output Feature Map: Since input is $3 \times 3$ and kernel is $2 \times 2$ (stride 1, no padding), the output will be $2 \times 2$.

\[\mathbf{Y} = \begin{bmatrix} \mathbf{2} & ? \\ ? & ? \end{bmatrix}\]
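Both calculation examples can be checked numerically, and the remaining entries marked "?" obtained, with a short script. This is a sketch under stated assumptions: `conv2d_valid` is a hypothetical helper (stride 1, no padding, channels-first nested lists), and the single-channel example is treated as the special case $C = 1$.

```python
def conv2d_valid(X, W, b=0):
    """Stride-1, no-padding convolution of one filter.

    X and W are lists of channels; each channel is a list of rows.
    Returns a 2D feature map as a list of rows.
    """
    k = len(W[0])
    out_h = len(X[0]) - k + 1
    out_w = len(X[0][0]) - k + 1
    return [
        [
            b + sum(
                X[c][i + m][j + n] * W[c][m][n]
                for c in range(len(W))
                for m in range(k)
                for n in range(k)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Example 2: one channel, 4x6 input, 3x3 kernel, no bias.
X2 = [[
    [1, 2, 0, 1, 0, 2],
    [0, 1, 2, 1, 1, 0],
    [1, 0, 1, 2, 0, 1],
    [2, 1, 0, 1, 1, 2],
]]
K2 = [[[1, 0, -1], [0, 1, 0], [-1, 0, 1]]]
Y2 = conv2d_valid(X2, K2)

# Example 3: three channels (R, G, B), 3x3 input, 2x2 filter, bias 1.
X3 = [
    [[1, 0, 1], [0, 1, 0], [1, 0, 1]],  # R
    [[1, 1, 0], [1, 0, 1], [0, 1, 1]],  # G
    [[0, 1, 0], [1, 1, 0], [0, 0, 2]],  # B
]
W3 = [
    [[1, -1], [0, 1]],   # W_R
    [[0, 1], [-1, 1]],   # W_G
    [[1, 0], [0, -1]],   # W_B
]
Y3 = conv2d_valid(X3, W3, b=1)

# The entries worked out by hand in the text.
assert Y2[0][0] == 2 and Y2[0][1] == 5
assert Y3[0][0] == 2

for row in Y2:
    print(row)
for row in Y3:
    print(row)
```

The printed rows give the complete $2 \times 4$ and $2 \times 2$ feature maps, so the hand calculations for the other positions can be compared against them.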